GANs for Dynamic Spatio-Temporal Patterns

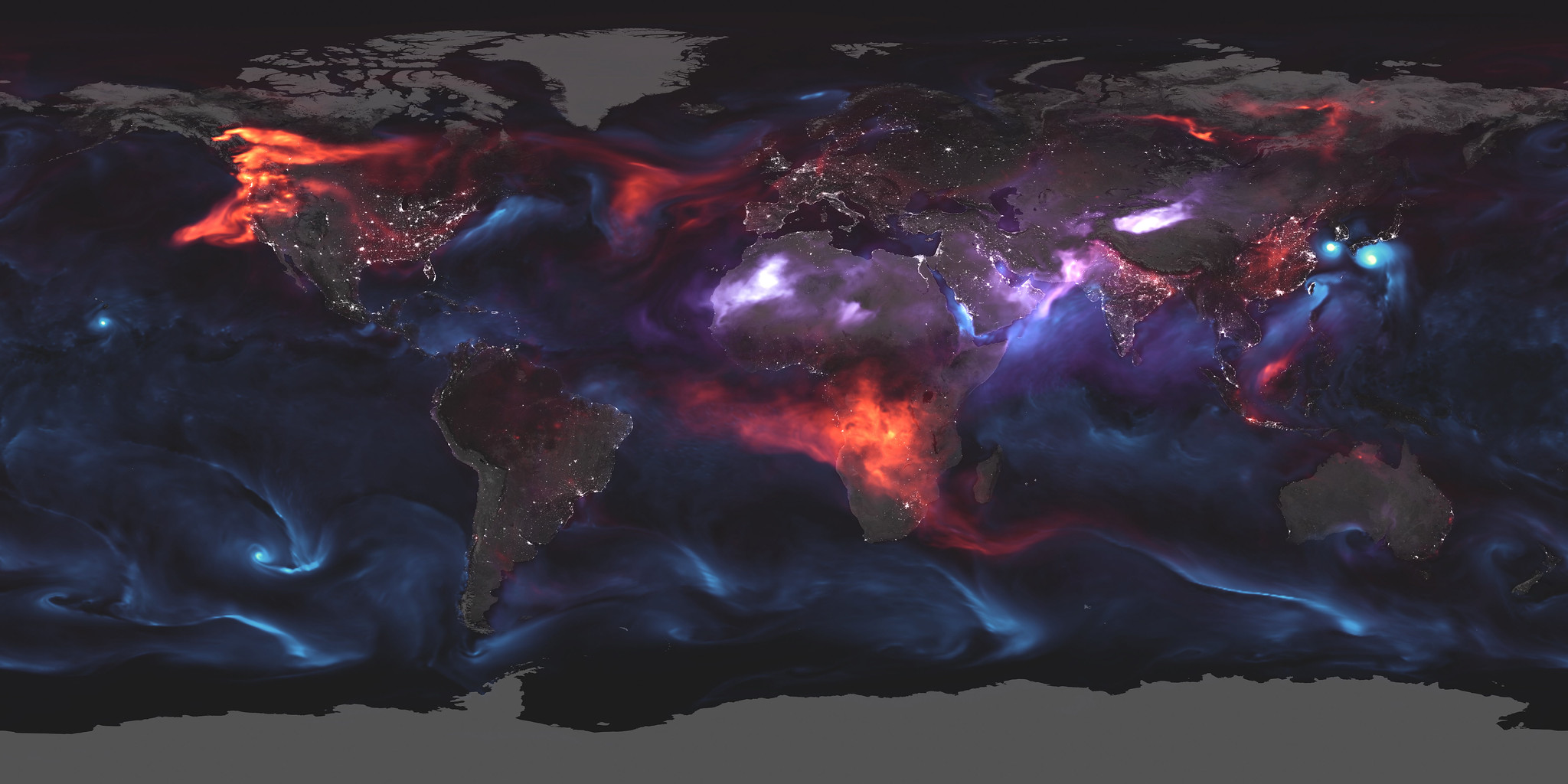

Deep generative modeling can help accelerate and scale the simulation of weather patterns and turbulent flows.

As our climate changes, tools for understanding and modeling our planet are becoming more important than ever. Simulating Earth systems and geophysical processes is crucial for a myriad of tasks, from modeling coastal areas for flood prevention to understanding climate dynamics through turbulent flow modeling. But granular models of our planet are difficult to tune and often rely on computationally expensive numerical simulations.

One promising approach is to apply machine learning, which can model complex, non-linear data at scale. ML is especially appealing given the increasing availability of near real-time Earth observation data, collected by fleets of satellites and pervasive mobile sensing infrastructure on the ground. These rich data sources, together with powerful neural network models, could help us simulate ocean dynamics and forecast extreme weather events.

However, recent research shows that traditional deep learning approaches might not be equipped to handle the intricate spatial, temporal, and spatio-temporal complexities inherent to these data. Earth systems are governed by physical laws that manifest over space and time, and neural networks have no built-in intuition for them. To overcome these issues, the authors of that research argue, it is crucial to augment deep learning approaches with domain expertise from the geospatial sciences.

This call to action has catalyzed a growing interest in physics-informed deep learning, in which (geo)physical constraints are integrated directly into models. My colleagues and I recently proposed such a method for modeling spatial dependencies: we train the neural networks on additional auxiliary tasks that involve predicting measures of spatial autocorrelation. The intuition is that by providing models with explicit information on how spatial dependencies manifest in the data, we can nudge them to learn these processes during training.

In our latest paper, we expand this idea to the spatio-temporal domain. But how can we measure spatio-temporal autocorrelation? A look into geography can help! This field has a long tradition of dealing with the complexities of geospatial data.

Geographers have developed metrics like the Moran’s I statistic to empirically assess spatial autocorrelation, which describes an observation’s dependency on its local spatial neighborhood. We can turn Moran’s I into a new measure of spatio-temporal autocorrelation with one small tweak. Moran’s I relies on averages computed over space; we replace those with averages computed over space and time. We refer to this new measure of spatio-temporal association as SPATE.
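To make the spatial starting point concrete, here is a minimal sketch of one common form of the local Moran’s I statistic on a regular grid. It is an illustration, not our implementation: the function name `local_morans_i`, the binary 4-neighbor ("rook") weights, and the `n - 1` normalizer are assumptions chosen for simplicity.

```python
import numpy as np

def local_morans_i(grid):
    """Local Moran's I per cell of a 2D grid (illustrative sketch).

    Assumes binary rook weights: each cell's neighbours are the cells
    directly above, below, left and right of it.
    """
    x = np.asarray(grid, dtype=float)
    z = x - x.mean()                       # deviation of each cell from the spatial mean
    m2 = (z ** 2).sum() / (z.size - 1)     # variance-style normaliser
    if m2 == 0:                            # constant grid: no variation to correlate
        return np.zeros_like(x)
    # Zero padding means neighbours outside the grid contribute nothing.
    p = np.pad(z, 1)
    neighbour_sum = p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
    return z * neighbour_sum / m2

# Two homogeneous blocks (similar neighbours) vs. a checkerboard (dissimilar neighbours).
blocks = np.repeat([[0, 0, 1, 1]], 4, axis=0)
checker = np.indices((4, 4)).sum(axis=0) % 2
print(local_morans_i(blocks).mean(), local_morans_i(checker).mean())
```

Positive values mark cells that resemble their neighbors, negative values mark cells that differ from them. The only quantity taken "over space" here is the mean inside `z`, which is the part a spatio-temporal variant would swap out.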

Specifically, we devise three variants of SPATE that compute these space-time expectations in different ways: (1) without temporal weighting and with access to future time-steps; (2) with temporal weighting and with access to future time-steps; and (3) with temporal weighting and without access to future time-steps.
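The difference between the three variants can be sketched as three ways of building the reference mean for each time step. The code below is a hedged illustration rather than the exact definitions from the paper: the function name `space_time_mean`, the exponential decay weights, and the `bandwidth` parameter are all hypothetical choices made for this example.

```python
import numpy as np

def space_time_mean(x, weighted=True, causal=False, bandwidth=1.0):
    """Reference mean for every time step of a (T, H, W) array (sketch).

    weighted=False, causal=False -> variant (1): no weighting, future visible
    weighted=True,  causal=False -> variant (2): weighting, future visible
    weighted=True,  causal=True  -> variant (3): weighting, future hidden
    """
    x = np.asarray(x, dtype=float)
    steps = np.arange(x.shape[0])
    lag = np.abs(steps[:, None] - steps[None, :])     # |t - t'| for every pair of steps
    # Assumed weighting: exponential decay in the time lag; uniform if unweighted.
    w = np.exp(-lag / bandwidth) if weighted else np.ones(lag.shape)
    if causal:
        w = np.tril(w)                                # zero the weights on future steps t' > t
    w = w / w.sum(axis=1, keepdims=True)              # each row of weights sums to 1
    spatial_mean = x.mean(axis=(1, 2))                # average over space, per time step
    return w @ spatial_mean                           # average over time, shape (T,)

rng = np.random.default_rng(0)
frames = rng.normal(size=(6, 4, 4))                   # toy sequence of six 4x4 grids
mu = space_time_mean(frames, weighted=True, causal=True)
deviations = frames - mu[:, None, None]               # replaces "cell minus spatial mean"
```

In the causal setting, the reference mean at a given step depends only on that step and earlier ones, which matters when the model is not supposed to see future frames.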

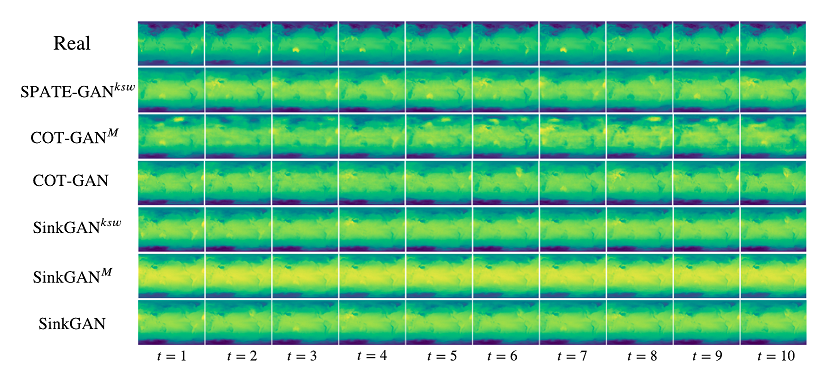

We then deploy SPATE in a generative adversarial network (GAN) application. GANs learn the data-generating process of the training data and can generate high-quality synthetic data samples. My team and I propose SPATE-GAN, a GAN augmented with our new SPATE metric. We accomplish this by setting up the loss function during training to reward the model for generating synthetic data that reproduces observed spatio-temporal dynamics.

We test SPATE-GAN on three relevant real-world problems: spatio-temporal point processes (used for, e.g., modeling disease spread), simulating surface temperature, and simulating turbulent flows (e.g., atmospheric and ocean currents). All these tasks exhibit intricate spatio-temporal patterns governed by complex physical laws. And compared to previous methods, SPATE-GAN can indeed improve performance, generating more realistic synthetic data.

There is still a long way to go until, for example, deep learning methods outperform large numerical climate simulators. Still, studies like ours and many more from the physics-informed deep learning community highlight the potential of deep learning for modeling our planet at scale.

These studies are also humbling reminders, however, that we cannot just throw data into multi-billion-parameter neural networks and hope for a good outcome. We must take a more balanced approach, combining expertise from the machine learning community with insights from relevant application domains.